The group was able to reverse engineer a machine vision system to generate what could be described as its archetypal image of a "goldfish." It looked like a kind of orange blur against a blank background. Strangely, the same question was actually posed during Rhizome's Seven on Seven conference this year, by Adam Harvey, Mike Krieger, and Trevor Paglen. But how do you check that the network has correctly learned the right features? It can help to visualize the network's representation of a fork. We train networks by simply showing them many examples of what we want them to learn, hoping they extract the essence of the matter at hand (e.g., a fork needs a handle and 2-4 tines), and learn to ignore what doesn't matter (a fork can be any shape, size, color or orientation). It seems strange that Google researchers would even need to ask this question, but that's the nature of image classification systems, which generally "learn" through a process of trial and error.

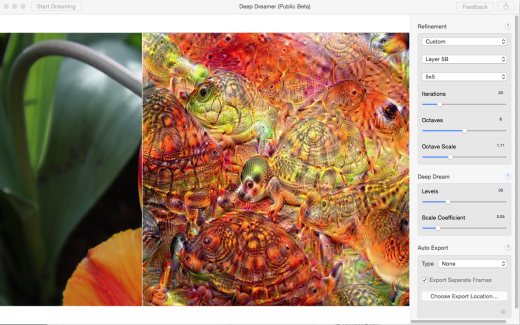

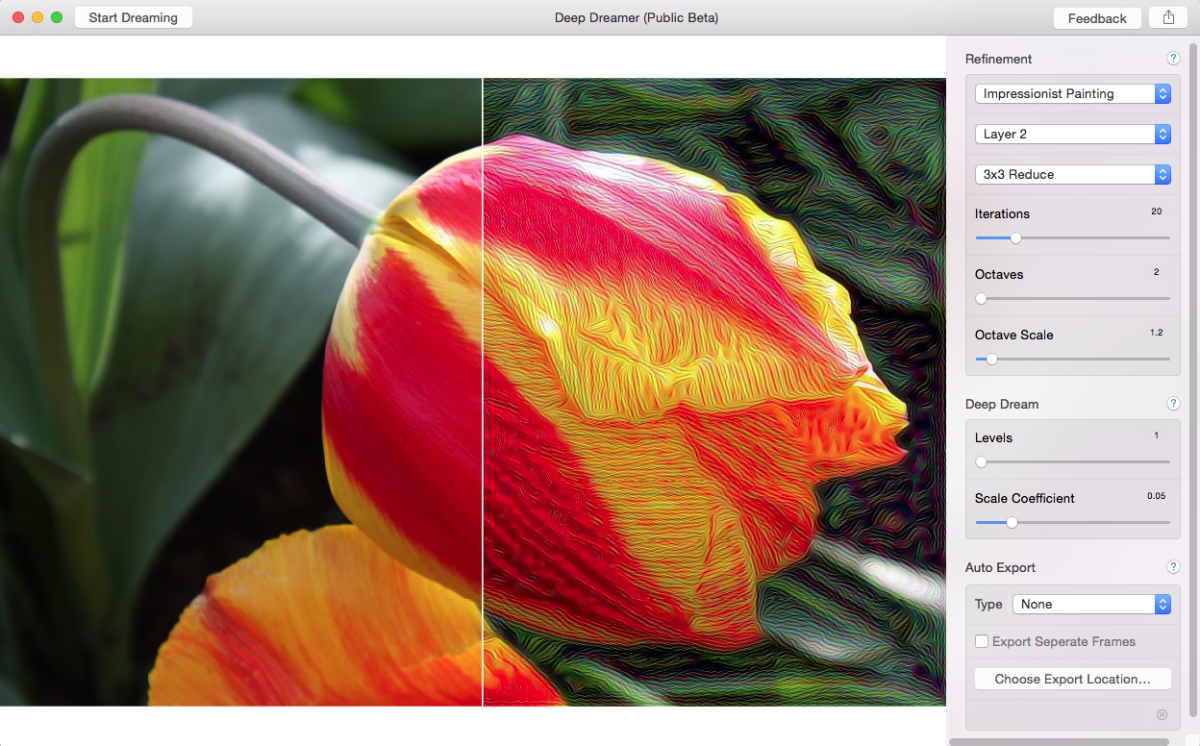

Antony Antonellis' A-Ha Deep Dream captures well the experience of encountering these unsettling images on the internet:Īntony Antonellis, A-ha Deep Dream (2015).īy way of recap: Deep Dream uses a machine vision system typically used to classify images that is tweaked so that it over-analyzes images until it sees objects that aren't "really there." The project was developed by researchers at Google who were interested in the question, how do machines see? Thanks to Deep Dream, we now know that machines see things through a kind of fractal prism that puts doggy faces everywhere. Participants in social media will by now be well aware of the artistic renaissance that has been underway since the release of Google's Deep Dream visualization tool last week. If this were a real Deep Dream image these would be dogs probably. They fucked in a feathery fractal in the time it took to render.Raphaël Bastide, Handmade Deep Dream (2015). Her face fanned into the carpet in bestial ecstasy as the puppies whorled with each thrust of his throbbing, dog-nosed proboscis. He tore his shirt the plane of his chest was rippled with rounded spirals of multicoloured muscle, each ghosting like a double-exposure. Her every darkness was a spiralling eye, a panting mouth. He found himself hard and glistening as a scarab. He turned his melting profile to her as she joined him on the floor and enveloped him in the purple curves of her thighs. She dropped the wrench and the premise of the water heater, never broken, having never once clanged. She kicked off her shoes and tip-toed towards him on delicate beaks.

Standing behind him, she unbuttoned her blouse, hands softening into fleshy paws as they moved across her body, revealing a pair of natural, bouncing Akita puppies. The muscles of his back ebbed as he worked. He dropped his tool kit and then to his knees in the shifting paisley of the carpet. He peeled himself from the door jamb and poured into the hall, clocking her décolletage. Pointed at the water heater: a sound, an ominous clang. He stood in the doorway in shirtsleeves, smelling of turpentine and musk. He rang, leaning into her strident doorbell, and she opened only then, feigning having travelled some distance from a calamity across the apartment. She kicked loose screws out from under the sink. When the handyman came, he knocked twice, hard, without hesitation. The first thing the internet used it on was porn. The network, ‘trained’ on Google’s vast storehouse of image-search results, tends to interpret shapes as animals, turning nearly any image into a hallucinatory, formless array of eyeballs, muzzles and beaks. In July 2015, Google released Deep Dream, a piece of software that uses a neural network to find and enhance patterns in images. Koufun, Underwater City, 2015, pornographic image from Brazzers website reworked with Deep Dream.
